Where Technology Meets Insight

Explore the latest in computing, hardware innovations, and digital trends. Incinerateurdejardin delivers in-depth articles, expert analyses, and breaking tech news to keep you informed and ahead of the curve.

Explore Tech Topics That Matter

Navigate through comprehensive guides and breaking stories across all computing sectors.

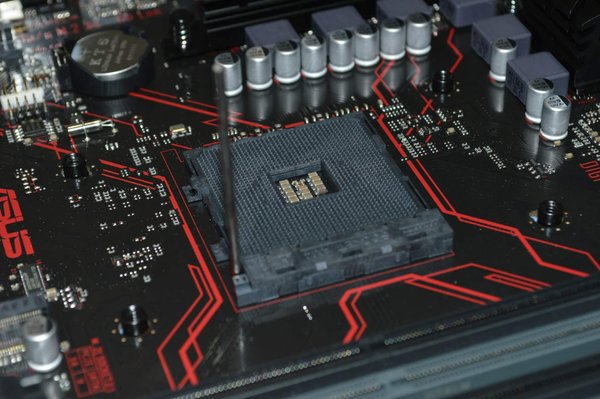

Hardware

Components, PCs and peripherals

View articles →

High tech

Innovations and new technologies

View articles →

Internet

Web, social media and online services

View articles →

Marketing

Digital marketing and SEO

View articles →

News

Tech and computing news

View articles →

Smartphones

Phones, tablets and apps

View articles →

Video games

Video games, consoles and gaming

View articles →

Why Tech Enthusiasts Choose Our Platform

We deliver comprehensive coverage of the computing world, from cutting-edge hardware reviews to emerging internet trends and gaming breakthroughs.

Explore →

Latest articles

Our recent publications

Harness the power of AI SEO tools to elevate your content

AI-powered SEO tools are transforming how content ranks and engages readers. Using these tools strategically boosts keyw...

How a digital agency can elevate your casino gaming brand

Partnering with a digital agency transforms your casino gaming brand by blending creativity, AI, and data-driven strateg...

Mastering Twitter scraping: tools and techniques uncovered

Unlocking the potential of Twitter scraping fundamentally transforms data analysis and marketing strategies. With the ri...

Transform your ideas into engaging videos with templates

Ready to turn your creative ideas into captivating videos? Discover how easy it is with pre-designed templates that cate...

Maximize online privacy with residential proxies today

Online privacy is more important than ever. Residential proxies can provide a crucial layer of protection in an age wher...

How do you set up a high-performance gaming rig with an AMD Ryzen 9 5900X and an RTX 3080?

...

What are the best methods for achieving low-latency streaming with an Elgato 4K60 Pro on a custom-built PC?

...

What are the detailed steps to configure a secure file-sharing setup using FreeNAS on a custom-built PC?

...

How can AI be used to improve the precision of environmental monitoring systems?

...

How can augmented reality be used to improve remote technical support for industrial equipment?

...

What are the steps to develop a secure and efficient AI-powered risk management platform?

...

What are the best practices for integrating SSO (Single Sign-On) in an enterprise environment?

...

What are the steps to configure a highly available PostgreSQL cluster using Patroni?

...

What are the steps to create a chatbot using Google's Dialogflow and Node.js?

...

How can UK-based non-profits use digital storytelling to engage with potential donors?

...

How to develop an omni-channel retail strategy for the UK's boutique fashion brands?

...

What are the best practices for integrating AI into the UK's agriculture technology solutions?

...

How Can AI Assist UK Travel Agencies in Personalizing Vacation Packages?

...

How Can UK Sports Organizations Use AI for Performance Analysis and Injury Prevention?

...

What Are the Best Practices for Implementing AI in UK Legal Document Review?

...

How to Use Your Smartphone to Monitor and Control a Smart Home Water Heater?

...

What Are the Best Strategies for Using Your Smartphone to Manage Household Chores?

...

What Are the Steps to Set Up a Virtual Health Monitoring System Using Your Smartphone?

...

How can AI be used to create dynamic and adaptive enemy AI in stealth action games?

...

How can developers use AI to generate lifelike NPC reactions in social simulation games?

...

What are the effective methods for creating realistic foliage and vegetation in forest exploration games?

...

Start Your Tech Journey Today

Dive into hundreds of articles covering every corner of the computing world. From beginner guides to advanced technical analyses, discover content that matches your expertise level and interests.

Join →